Alessandro Favero

I’m an inaugural Physics-AI Fellow at the University of Cambridge and the Sansom Research Associate at Emmanuel College. I’m also part of the interpretability team at Polymathic AI.

I work on the physics of learning and neural computation. My research combines theory and empirics to advance our fundamental understanding of AI, with a focus on the representations deep neural networks acquire – of language, images, scientific data, and tasks. Recent topics include:

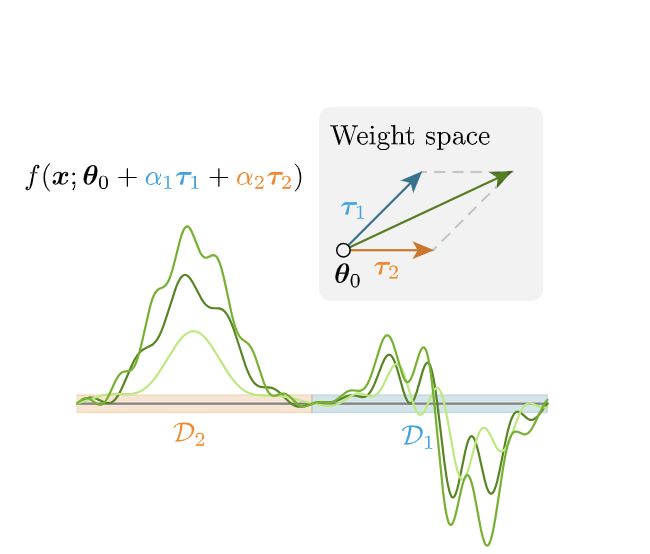

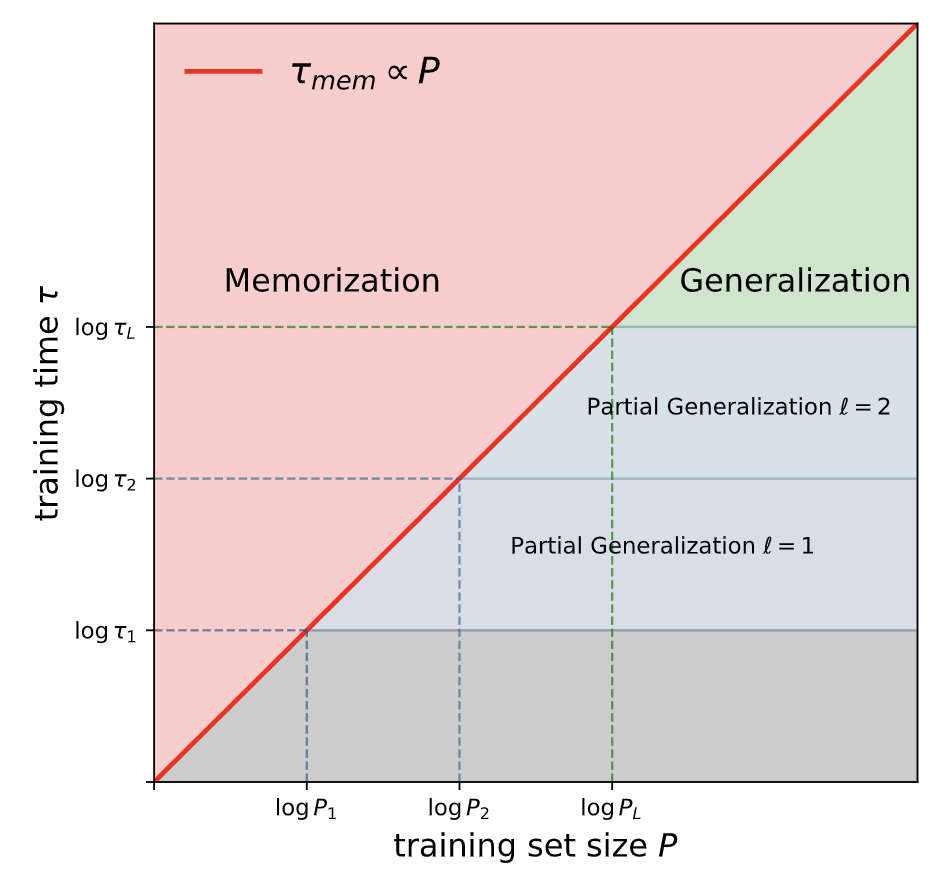

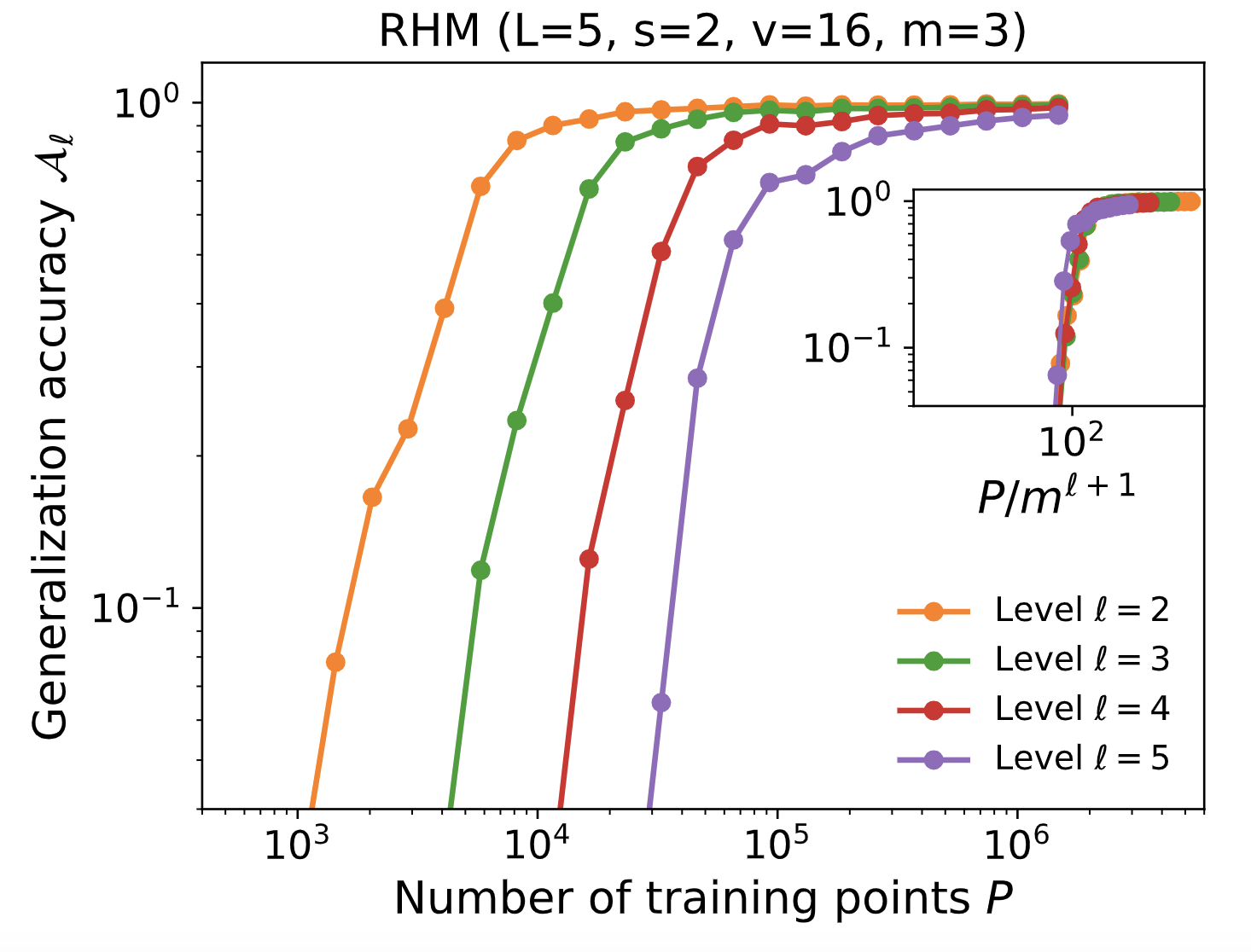

- memorization, generalization, and compositionality in generative models

- formal grammars and the structure of language

- weight-space geometry of foundation models

Before Cambridge I did my PhD at EPFL with Matthieu Wyart and Pascal Frossard, with a summer at Amazon’s AI Labs in Stefano Soatto’s group working on multimodal LLMs. Earlier, I studied physics in Turin, Trieste, and Paris.

I’m always happy to hear from prospective students interested in the science of deep learning. Email volume is high, so a short note explaining your background and what specifically draws you to my work helps me get back to you faster.

news

| Date | News |
|---|---|
| May 11, 2026 | Speaking at the first FLARE workshop at EPFL bridging physics, linguistics & neuroscience of LLMs. Up next, Machine Learning Physics in Okinawa and Youth in High Dimensions at ICTP Trieste in July. |
| May 05, 2026 | Gave a talk on Editing AI Minds at the Infosys-Cambridge AI Industry Symposium, a day connecting academic and industry perspectives on where AI is heading. |
| Nov 05, 2025 | Visiting JHU for a seminar on diffusion models, then a talk at the Flatiron Institute’s CCM in NYC, and finally Stanford for the kickoff workshop of the Simons Collaboration on the Physics of Learning. |
| Sep 25, 2025 | My PhD thesis has been awarded the G-Research EPFL PhD prize in maths and data science. Many thanks to G-Research for this honor. |
| Apr 08, 2025 | I gave a talk at the Perimeter Institute for Theoretical Physics on my research into creativity and compositionality in diffusion models. |

selected publications

-

The physics of data and tasks: Theories of locality and compositionality in deep learningPhD Dissertation, École Polytechnique Fédérale de Lausanne (EPFL), 2025

The physics of data and tasks: Theories of locality and compositionality in deep learningPhD Dissertation, École Polytechnique Fédérale de Lausanne (EPFL), 2025 -

Bigger isn’t always memorizing: Early stopping overparameterized diffusion modelsarXiv preprint, 2025

Bigger isn’t always memorizing: Early stopping overparameterized diffusion modelsarXiv preprint, 2025 -

How compositional generalization and creativity improve as diffusion models are trainedInternational Conference on Machine Learning (ICML), PMLR, 2025

How compositional generalization and creativity improve as diffusion models are trainedInternational Conference on Machine Learning (ICML), PMLR, 2025 -

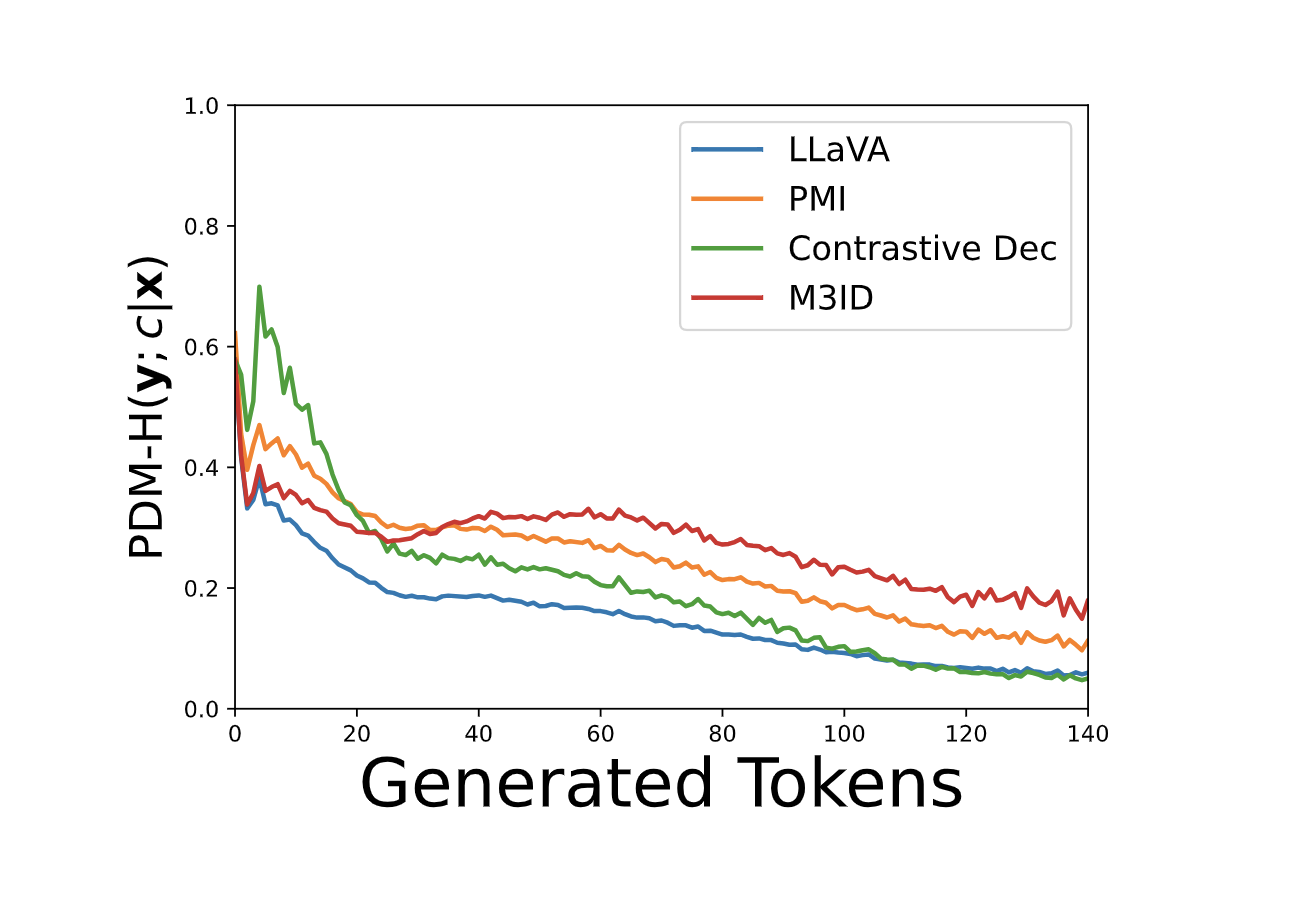

Multi-modal hallucination control by visual information groundingIEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024

Multi-modal hallucination control by visual information groundingIEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024